Interview by Estela Oliva

Composer and performer Jennifer Walshe has been described by the Irish Times as the ‘most original compositional voice to emerge from Ireland in the past 20 years’. Through commissions, broadcasts and performances, her work has been seen, played and awarded all over the world. In recent years, Jennifer has been researching artificial intelligence (AI) and its potential in experimental music and performance, a field that has been growing steadily.

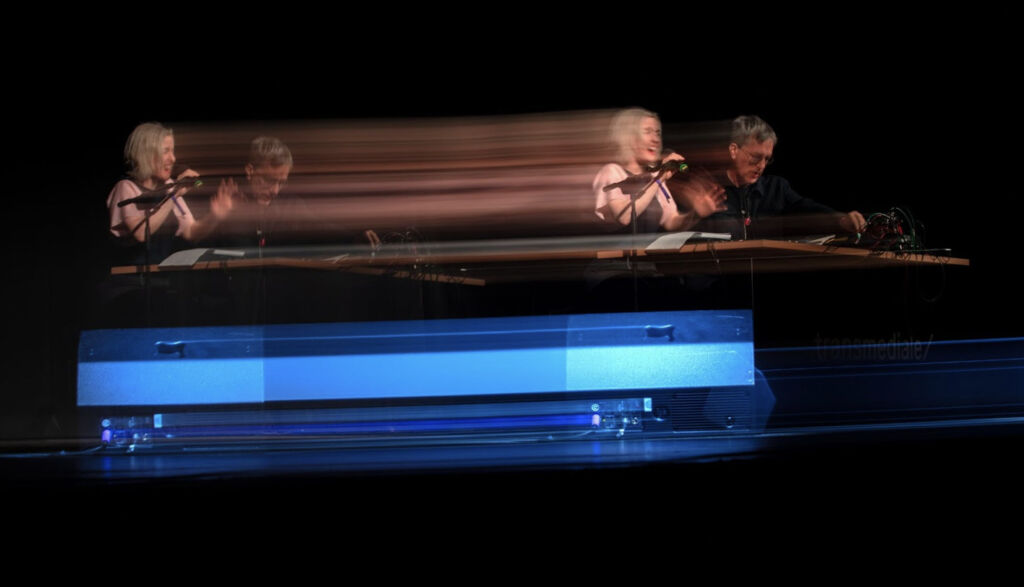

This year’s edition of transmediale Festival 2023 in Berlin brought together a new performance using AI by Jennifer Walshe with musical collaborator, composer Jon Leidecker, who has been producing music under the name Wobbly since 1990. The artists came together to respond to the main festival theme of questioning the paradoxes of scale in our current socioeconomic environment.

We spoke with the artists about their collaboration and their work named MILLIONS OF EXPERIENCES (HUGE IF TRUE), which was performed during transmediale at Akademie der Künste in collaboration with an ensemble of musicians featuring Owen Gardner, Chris Heenan, Jana Luksts, Kanae Mizobuchi, Weston Olencki and Andrea Parkins.

Together they created a unique spectacle, an ‘experiment’ as Walshe herself called it, blurring the boundaries between human and machine through a combination of live voices, machine-made loops played with real instruments, AI-generated Enya, and an archive of voice samples to unsettle ideas of what is purely technological and purely human.

How does collaboration with other artists fit into your practice, and why is it important?

Jon Leidecker: If composition is usually the sum of everything you already know, improvising is how you learn new things, so collaboration has only become more important the further along I go. Surprises never feel like mistakes when I’m playing with Jennifer.

Jennifer Walshe: I’ve had lots of close collaborators, and what’s exciting to me about each formation is that with each person, you find the unique coordinates of the territory you’re both interested in exploring. It’s a real joy to find someone who can push you further into that territory. Our chat history is very vivid evidence of our shared interests…

When did you start collaborating? And what are you aiming to get?

Jon Leidecker: Our mutual friend Vicki Bennett brought us (along with M.C. Schmidt) to Manchester to interpret her work ‘Notations’, and it clicked from the drop. Right now, each show magically emerges like a fully formed yet radically different version of the same schizoid piece of music – the elements are always different, but the whole is the same.

Jennifer Walshe: I remember playing that show very well; during one part, I thought I was singing with a sample from a cheesy exotica album, and it felt so organic and sat perfectly in my voice. After I asked Jon what record he was using, he held up a very old iPhone and said, “Oh, I just held this up and sampled your voice as you were singing.” So right from the start, there was this melding of pre-recorded samples and live samples, which could all intermingle.

For the occasion of the transmediale 2023 commission, was this collaboration different from previous times?

Jon Leidecker: I have a long-running piece called Monitress1, which explores the implications of these fun little pitch-tracking apps that run on my iPhone. You turn on the microphone, and it converts what it hears to a tune, which it then plays on a synth – singing along. It’s often a solo piece, but it really takes off in concert with Jennifer – anything she sings, from ‘Danny Boy’ to hysterical screaming, the machines find the melody in it and sing along in style.

So transmediale gave us an opportunity to do this with a larger ensemble, adding six additional improvisers to the mix – Jennifer conducted the musicians, and I processed their sounds. It’s a baffling sound when musicians are clearly playing with spontaneous abandon, and the synths are locked in as if they’re playing a written score. This is what they’re pretty much doing – thanks to machine listening. You only need 20 milliseconds in order to write that score and learn it. It’s a strikingly meaningful sound. None of this would have worked without Jennifer’s long experience with improvised conduction; you need a certain confidence to lead an ensemble through with the right balance of structure and freedom.

Jennifer Walshe: This was an opportunity to go really big – to integrate six amazing musicians into our process and to use conduction live to direct everyone so that they were operating as an extension of our duo. The people we chose – Owen Gardner, Chris Heenan, Jana Luksts, Kanae Mizobuchi, Weston Olencki and Andrea Parkins – are all expert improvisers with a wide range of different practices.

In MILLIONS OF EXPERIENCES (HUGE IF TRUE), you explore the process of scaling and descaling. Could you tell us more about how you addressed this? And what were your findings?

Jennifer Walshe: To “scale” something means to maximise profit while minimising investment (or better yet, not needing to involve any investment). When Nora O Murchú told us that “scale” was going to be the theme for transmediale, I was struck by the fact that on a very practical level, my collaboration with Jon works because we use technology to scale. We are only two people, yet we can use a variety of electronic devices to create a richly textured musical performance.

I liked the idea that we could “descale” or “unscale” our show by expanding the performing forces to eight people instead of two and by asking these extra musicians to do a lot of the sound-making and processes Jon and I would normally use technology to carry out.

The result is that MILLIONS OF EXPERIENCES (HUGE IF TRUE) is a conduction piece. There’s a detailed list of rules, and during the performance, I use hand signals and cards to indicate to the musicians different things I want them to do. Every conduction system – every conduction piece – has its own philosophy, its own approach to sound.

MILLIONS OF EXPERIENCES (HUGE IF TRUE) is concerned with having the musicians carry out modes of behaviour & listening, processes and material which Jon and I normally do as a duo, so the directives are translations of our idiosyncratic approach and required lots of reflection to develop – listening through to recordings of our live sets, and analysing what we did with a view to being able to distil our mode of working down and make it explainable to other people.

Shout out to the students in my Free Improvisation class at the University of Oxford – we test-ran an early version of the conduction directions on them, which was very helpful!

I also asked the musicians to do some things not so common in conduction work. For example – I asked Jana Luksts (piano) and Kanae Mizobuchi (voice) to learn some of my pre-existing music so that I could cue them to play this material live in performance and mess with it. Among others, Jana and Kanae learned my piece Trí Amhrán2, a series of three songs (in Irish!) made using AI.

I could trigger individual movements, pause, change the tempo/dynamic etc, live using hand signals. I have often used Trí Amhrán as a sample in live shows with Jon (and we use all three songs in our hörspiel LIMITLESS POTENTIAL3), but to be able to trigger real live humans, to play it and mess with it using hand signals is a way richer experience!

Aside from this performance, there was another work presented at the festival, MOREOVER. Could you tell us more about how it differed from MILLIONS OF EXPERIENCES (HUGE IF TRUE)?

Jon Leidecker: MOREOVER is a work in progress in which all of our constant international texting about what technology is doing to us gets turned into music. The texting grew out of a shared sense of humour, obsessively going through interviews with tech startup founders and Silicon Valley CEOs. There’s nothing like hearing billionaires grapple with the existential issues being unleashed by AI, just long enough to dismiss them when the subject turns back to business.

There’s been some overlap between our projects and my work with Negativland, as that band’s long history with meta-media collage and digital intellectual property issues is being revisited anew by AI (notably, with the political ideologies reversed – it’s now the corporate CEOs preparing Fair Use arguments to defend their data scraping, and the young digital artists talking about privacy and ownership rights).

With Negativland4, we sample those CEO quotes directly – with Jennifer, those quotes also wind up in her notebooks, which she uses live as a source – it turns out CEO & EA musings make for an excellent libretto. Our deliverable is the ecosystem itself! Image diversity is more useful than photorealism! Sometimes the original sample is unbeatable, such as when Sam Altman’s voice falters when he says he feels terrible that AI is the reason his Rationalist friends have decided not to have kids. He thinks in the future, so many jobs will be lost to AI that our economy will be forced to come up with new solutions.

Perhaps they think about these ethical quandaries, but answering them isn’t exactly their department. So MOREOVER has more of a ripped-from-our-news-feed collage approach, which sometimes coalesces into songs and monologues5.

Jennifer Walshe: As a duo, we do very much nerd out on anything tech/ML-AI-related – I think one of the things we first bonded over was our love of the vocal files attached to the 2016 WaveNet paper6. That’s a bit niche for many other people, but we are fascinated by it, so it all goes into our performances.

So it was a beautiful experience to do MOREOVER on Sunday night after having had the excess of MILLIONS OF EXPERIENCES (HUGE IF TRUE) because each experience allowed us to go deeper into the territory in different ways, to see what it’s like to return to the duo-territory after the ensemble experience…

In your work, you’ve used AI tools to integrate musical narratives from machine learning, for example, the Enya-generated voice or the archives of voice samples. Could you tell us more about this part of the process and what it means to you?

Jennifer Walshe: The process of generation is fascinating to me because you get to learn a lot about how the networks function. A lot of the AI-generated works (I should more correctly say Machine Learning-generated works, but let’s stick with AI as it’s the more commonly-used term at the moment) you might see today are the products of very long processes of development.

You don’t see the results of all the different training epochs, the missteps the network made, the weird cul-de-sacs it ended up in. Witnessing and understanding that process is really important to me. When I made A Late Anthology of Early Music Vol. 1: Ancient to Renaissance7 (2020), I was working with over 800 training outputs which Dadabots very kindly generated for me by training their version of SampleRNN on my voice. I was able to hear the network gradually learn to imitate my voice, and I mapped that process onto the early history of Western music.

Similarly with ULTRACHUNK8 (2018), I made the training dataset – a year’s worth of videos of myself improvising into my webcam – so I had a very concrete sense of exactly what was in there. Memo Akten and I collaborated to make the project – I made the dataset, and he coded the extremely complex architecture to be able to use AI to generate video & audio of me improvising live. Going through the different iterations of that and then being able to improvise live with this me-not-me para-Jen was a fascinating process.

Jon Leidecker: What I love most about ULTRACHUNK is its real-time interactivity; it’s reacting to you quickly enough that you can truly react right back. Real musical performance is about listening, and up until now, recordings haven’t been able to listen back. That’s kind of been the Faustian bargain since electronic music broke out in the 50s – you could have any imaginable sound, but what you’d have at the end was a tape, a dead object.

So what’s performance? We’ve gotten used to it, learned to play to click tracks, and added synchronized visuals on huge screens… but no matter how great the tape sounds, it’s always going to be odd when something making that much noise is also totally deaf. Good music always involves listening. The tech I use is much less bleeding edge than Jennifer’s. Pitch-tracking algorithms have been around for half a century already.

But that’s just it – the machines have been listening to us for a while now. These sounds may be abstract, but they’re also not a metaphor for anything. They are literally the sound of my iPhone’s built-in microphone listening in to us.

You have been working with AI for a while. What are your thoughts about the future of AI, especially in the context of arts and music?

Jon Leidecker: We’re talking about too much of this as if it is new. Electronic music has been dealing with issues of generative music and cybernetics since the 1940s, with Louis and Bebe Barron working out the creative potential of these new tools, making self-playing instruments capable of observing their own behaviour. I take the core questions faced by creative electronic musicians to involve issues of automation. What can be automated that points one in unheard musical directions?

Can networks involve more people, as opposed to replacing them? What new roles open up for humans once the old decisions are being handled? Electronic music has over 70 years’ worth of deeply moral and very creative responses to the issue of automation, and these patent-chasing corporations aren’t likely to bring up any of that work during their product demos. They need you to believe they invented this. But there’s a long and helpful history, and there’s still time to learn it (the liner notes of my last album ‘Popular Monitress9’ pay homage to a lineage of composers that should be a lot better known, given the last 5 years of non-stop churn over the potential of this music). What I look for when listening to new music dealing with this subject are works that can be performed in real time, and works which find interesting new ways to keep Humans-in-the-Loop – new models for the human agency now that an increasing number of machines are in this loop as well. Otherwise, it doesn’t even matter how beautiful it is – it might as well just be a recording10.

Jennifer Walshe: I think MOREOVER is one of the key places I express joy, excitement, doubts, and fears. In 2018 I gave a talk11 at the Darmstädter Ferienkurse, where I stated in no uncertain terms that I felt AI was going to impact art and music massively, and it would be the dominant topic we would be dealing with.

Even as wildly optimistic/dystopic as I was then, I could not have predicted that only five years later, we’d have tools like Midjourney, Stable Diffusion, Dall-E, GPT-4, etc. – tools that are unbelievably easy to use and have been taken up enthusiastically by people who had little access or interest previously. As my Irish father would say, the current generation didn’t lick it off a stone; there’s a long history we can trace.

But even knowing that, the current moment seems so charged. As a result, I find myself very drawn to writing about AI, whether in essays or fictional pieces where I can work on it in a speculative sci-fi mode. I’ve taken this approach in previous performance pieces about AI, like IS IT COOL TO TRY HARD NOW?12 (2017), or even works like THE SITE OF AN INVESTIGATION13 (2018), where I’m telling stories about AI trained on scrapings of Gwyneth Paltrow’s Goop or a fake Jackson Pollock generated using machine learning. But over the last year, I’ve been trying to figure out my position on robotic babies14 and the Singularity15, and essays and short stories have been a good place to do that.

What’s next for you? Are you planning any more collaborations?

Jon Leidecker: Hopefully, we get to do that ensemble again soon, resources permitting. It’s going to be like nailing down Mercury because it’s so satisfyingly different every time we play it, but I would love for MOREOVER to finally become some kind of album-like thing.

Jennifer Walshe: Always! Playing and planning and messaging each other, speculating about what prompts were used in the AI-generated Biden attack ad…

You couldn’t live without…

Jon Leidecker: Patience!

Jennifer Walshe: Gaviscon!